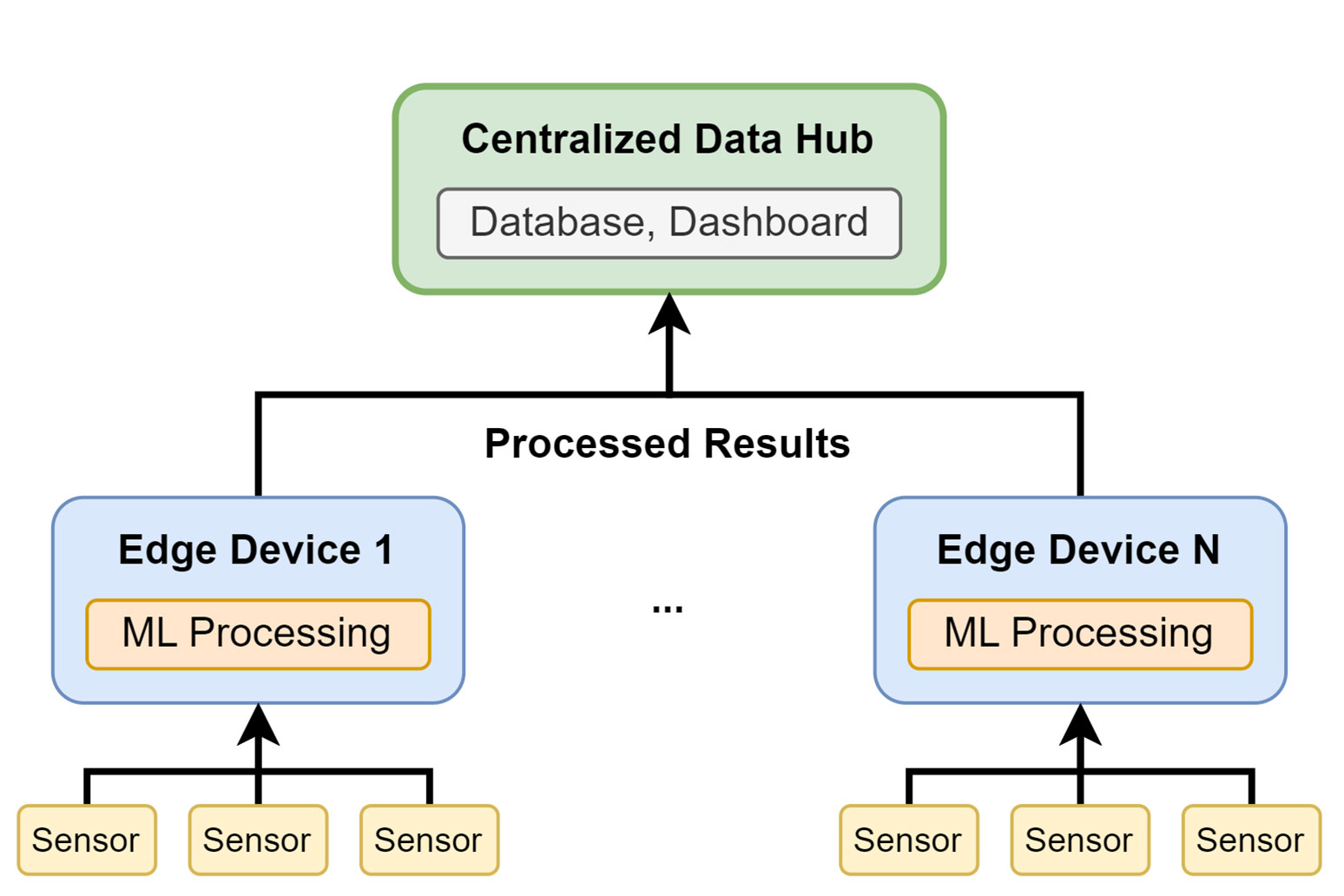

Edge AI combines edge computing and artificial intelligence (AI) methods to enable fast and precise data processing on edge devices. Designing an Edge AI solution for a given task requires experience in refining the technical requirements. The main challenge is to co-design the AI model, the software stack, and the embedded hardware of the edge device as a unified system.

Unleashing the Power of Edge AI: Exploring New Frontiers

Low latency through local data processing with Edge AI on edge devices

Embedded Machine Learning describes the execution of machine learning models on such edge devices, whereas the term Tiny Machine Learning is used for systems built on microcontrollers with particularly limited memory and computational capabilities, which enables battery-powered, off-grid applications. [1] Edge devices span a wide range of computing power classes, with fluid transitions between them.

Edge AI can be challenging in comparison to classic server-side centralized AI, but it also comes with some benefits:

Reduced Latency

Environmental Impact

Edge AI can help reduce the environmental impact of AI. If the edge device processes the data locally, energy-intensive transmission of raw data is not required. Edge devices can be programmed to transmit raw data to online storage only if it is new or unexpected, or to transmit only the relevant features of the data.
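The idea of transmitting only new or unexpected data can be sketched as a simple on-device novelty gate. This is a minimal illustration, not the method used in any particular product: the class name `NoveltyGate`, the z-score criterion, and the parameters `threshold` and `warmup` are assumptions chosen for the example.

```python
import math


class NoveltyGate:
    """Decide on-device whether a sensor reading is worth transmitting.

    Keeps a running mean and variance (Welford's algorithm) and flags a
    reading as novel when it deviates from the running mean by more than
    `threshold` standard deviations. All names are hypothetical.
    """

    def __init__(self, threshold: float = 3.0, warmup: int = 10):
        self.threshold = threshold
        self.warmup = warmup  # readings to observe before gating starts
        self.count = 0
        self.mean = 0.0
        self.m2 = 0.0  # running sum of squared deviations from the mean

    def should_transmit(self, value: float) -> bool:
        novel = False
        if self.count >= self.warmup:
            std = math.sqrt(self.m2 / (self.count - 1))
            novel = std > 0 and abs(value - self.mean) > self.threshold * std
        # Update the running statistics with the new reading
        # (novel readings are included, so the gate adapts over time).
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (value - self.mean)
        return novel


gate = NoveltyGate(threshold=3.0, warmup=10)
readings = [20.0, 20.1, 19.9, 20.2, 19.8, 20.0, 20.1, 19.9, 20.0, 20.1, 20.0, 35.0]
sent = [r for r in readings if gate.should_transmit(r)]
print(sent)
```

In this example only the last reading is far enough from the running mean to be transmitted; the other eleven stay on the device. A real deployment would likely use a criterion suited to the sensor and task, for example a learned anomaly score instead of a z-score.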

Data Privacy

The privacy of sensitive data can also be enhanced by using Edge AI. When raw data is not transmitted to a central server, it remains local on each edge device, which considerably reduces security risks. However, the local datasets are often not identically distributed, and each edge device may have its own statistical characteristics.

Nevertheless, machine learning models can be trained on the local edge devices using federated learning algorithms. Here, the models are trained locally and only the model parameters are sent to a global server, which preserves data privacy while still gaining knowledge from every edge device.
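A common instance of this scheme is federated averaging, sketched below for a toy one-dimensional linear model. It is an illustrative sketch under stated assumptions: the functions `local_update` and `federated_average`, the learning rate, and the synthetic client data are hypothetical and not taken from the reference design. Each client holds data from a different input range, mimicking local datasets that are not identically distributed.

```python
def local_update(weights, data, lr=0.05, epochs=20):
    """Train y = w*x + b on one device's private data with gradient descent."""
    w, b = weights
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in data:
            err = (w * x + b) - y
            grad_w += 2 * err * x / len(data)
            grad_b += 2 * err / len(data)
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


def federated_average(client_weights, client_sizes):
    """Average model parameters, weighted by each client's dataset size."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return tuple(
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    )


# Three devices; every sample follows y = 2x + 1, but each device
# observes a different range of x (non-identical local distributions).
clients = [
    [(0.0, 1.0), (0.5, 2.0), (1.0, 3.0)],
    [(1.0, 3.0), (1.5, 4.0)],
    [(2.0, 5.0), (2.5, 6.0), (3.0, 7.0), (1.0, 3.0)],
]

global_weights = (0.0, 0.0)
for _ in range(200):  # communication rounds
    # Only parameters leave the devices; the raw (x, y) pairs never do.
    updates = [local_update(global_weights, data) for data in clients]
    global_weights = federated_average(updates, [len(d) for d in clients])

print(global_weights)
```

The server only ever sees the parameter tuples returned by `local_update`. With this noise-free toy data the global model approaches w = 2, b = 1; with real, noisy and strongly heterogeneous data, convergence is typically slower and may need more careful tuning.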

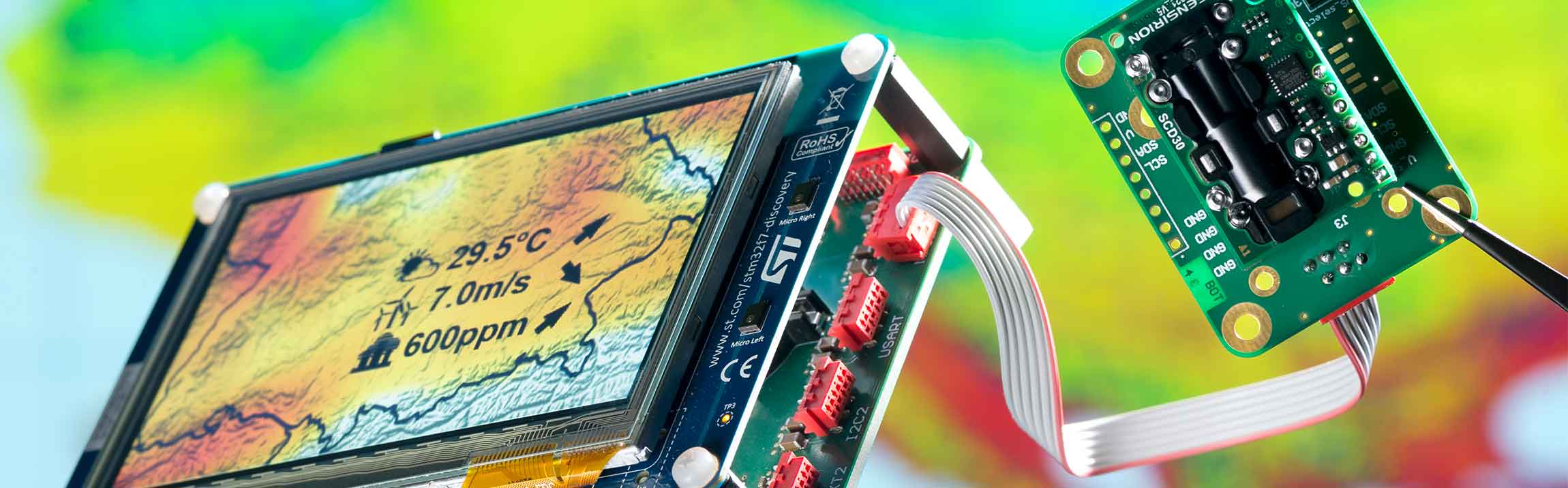

Edge AI Reference Design

As part of the research project AI in the sensor node (KIS), the Fraunhofer EMFT group Machine Learning Enhanced Sensor Systems has developed an Edge AI reference platform. It is microcontroller-based embedded hardware that enables generic sensor connectivity. It can be deployed either as a mains-powered device connected to a wired communications interface, or as a self-contained, battery-powered, low-power wireless communications device. The reference design includes a complete data pipeline with pre-processing and database connectivity. The server-side data pipeline is a containerized environment built from best-practice open-source software packages. Depending on the task, the machine learning model can range from simple linear models to complex deep neural network architectures.

The deployment and inference of an AI model at the edge are limited by the available computing power. Methods such as quantization and pruning reduce the memory and computational requirements, but their impact on model performance on the device must be evaluated. Server-side model development and training are often performed in Python, while the embedded software for the edge device, such as the machine learning inference engine, is programmed in C/C++. Therefore, it is essential to evaluate model performance directly on the edge device. [2]
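The numerical effect of quantization and pruning can be estimated on the server before deployment. The sketch below assumes symmetric per-tensor int8 quantization and magnitude-based pruning of a small linear model; the function names, weights, and inputs are hypothetical. It gives only a first estimate and does not replace the evaluation on the edge device itself.

```python
def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: returns (int values, scale)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map int8 values back to floats to simulate quantized inference."""
    return [v * scale for v in q]


def prune_by_magnitude(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def predict(weights, x):
    """Linear model output for one input vector."""
    return sum(w * xi for w, xi in zip(weights, x))


def mean_abs_deviation(candidate, reference, inputs):
    """Average output difference between a compressed and the original model."""
    diffs = [abs(predict(candidate, x) - predict(reference, x)) for x in inputs]
    return sum(diffs) / len(diffs)


weights = [0.82, -0.03, 0.41, 0.004, -1.27, 0.09]  # illustrative values
inputs = [
    [1.0, 0.5, -0.2, 0.3, 0.8, -1.0],
    [0.1, -0.7, 0.9, 0.0, -0.4, 0.6],
    [-0.5, 0.2, 0.3, 1.0, 0.1, 0.4],
]

q, scale = quantize_int8(weights)
quantized = dequantize(q, scale)
pruned = prune_by_magnitude(weights, sparsity=0.5)

print("int8 weights:", q, "scale:", scale)
print("pruned weights:", pruned)
print("deviation after quantization:", mean_abs_deviation(quantized, weights, inputs))
print("deviation after pruning:", mean_abs_deviation(pruned, weights, inputs))
```

Comparing the two deviations shows which compression step costs more accuracy for a given model. On the device, rounding behavior, integer accumulation, and the C/C++ inference engine can shift these numbers, which is why the final measurement has to be taken on the target hardware.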

[1] D. Situnayake and J. Plunkett, AI at the Edge. O'Reilly Media, Inc., 2023.

[2] F. Fraidling et al., "Tiny Machine Learning Sensor Platform for Local Sensor Data Fusion and Evaluation," in MikroSystemTechnik Kongress, Dresden, Germany, 2023.

Leverage our Edge AI expertise at Fraunhofer EMFT for your specific application needs. We are eager to collaborate and innovate with you. Contact us!